From Idea to Intelligence: Your Journey into AI Automation and Building LLM Apps

Picture this: It’s Monday morning, and your startup team is drowning in support tickets while simultaneously trying to “add AI” to your product roadmap because, well, everyone else is doing it. Sound familiar?

Meanwhile, your competitor just launched an AI-powered feature that actually makes sense, and you’re wondering how they managed to crack the code while you’re still figuring out where to start.

Here’s the thing: building LLM apps for AI automation isn’t just another tech buzzword. It’s the bridge between those repetitive tasks eating your team’s time and the intelligent systems that can handle them seamlessly. Today, we’re going to explore what LLM apps really are, why automation powered by language models is different from everything that came before, and most importantly, how you can start building solutions that actually deliver value. Ready to turn those late-night “there has to be a better way” moments into your competitive advantage?

What Exactly Is “AI Automation” Today?

Let’s clear the air here. When I say AI automation in 2025, I’m not talking about those rigid robotic process automation (RPA) tools your enterprise neighbors have been using for years. You know, the ones that break the moment someone moves a button on a webpage?

AI automation today is fundamentally different. It’s powered by large language models that can understand context, interpret natural language, and make intelligent decisions on the fly. Think of traditional automation as following a recipe to the letter: if step 3 says “add salt” but there’s no salt, the whole process crashes. LLM-powered automation? It reads the situation, understands you’re making soup, and suggests pepper instead.

This isn’t about replacing scripts and workflows entirely. It’s about adding a brain to the process. The LLM becomes the decision-maker that can handle ambiguity, while traditional automation tools handle the execution. It’s the “brains + hands” combination that makes modern AI automation so powerful and, honestly, so much more reliable than the brittle systems we’ve dealt with before.
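To make the “brains + hands” split concrete, here is a minimal sketch. Everything in it is an assumption for illustration: the action names are hypothetical, and `decide` is a keyword stub standing in for a real model call so the example runs without an API key.

```python
from typing import Callable, Dict

# Hypothetical "hands": deterministic actions the automation is allowed to run.
def reset_password(ticket: str) -> str:
    return "sent password reset link"

def escalate_to_human(ticket: str) -> str:
    return "escalated to a human agent"

ACTIONS: Dict[str, Callable[[str], str]] = {
    "reset_password": reset_password,
    "escalate": escalate_to_human,
}

def decide(ticket: str) -> str:
    """Stand-in for the LLM "brain". A real app would call a model API here."""
    return "reset_password" if "password" in ticket.lower() else "escalate"

def handle(ticket: str, brain: Callable[[str], str] = decide) -> str:
    choice = brain(ticket)
    # Never execute an action the model invented; fall back to a safe default.
    action = ACTIONS.get(choice, escalate_to_human)
    return action(ticket)
```

The design choice worth noticing is the allow-list: the model only picks a name, and plain code decides whether that name maps to something it is willing to run.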

LLM Apps: The Engine Behind Modern Automation

So what exactly are LLM apps? Simply put, they’re applications that leverage large language models like GPT, Claude, LLaMA, or Gemini as their core intelligence layer. But here’s where it gets interesting: these aren’t just chatbots (though chatbots can be LLM apps).

We’re talking about document processors that can understand contracts in plain English, workflow bots that can interpret your team’s Slack messages and trigger the right actions, summarization tools that actually grasp the nuance in your meeting notes, and code assistants that understand what you’re trying to build, not just what you’ve written.

The magic of LLM apps lies in their flexibility. Traditional software requires you to anticipate every possible input and code a response for it. LLM apps? They work with natural language and can adapt to situations you never explicitly programmed for. It’s like having a really smart intern who can figure things out instead of a rigid robot that only knows one way to do things.

Why Build LLM Apps for Automation Instead of Plugging In Pre-Made Tools?

Look, I get it. There are tons of AI SaaS tools out there promising to solve all your problems with a simple integration. So why would you want to build your own LLM apps?

Here’s the reality: off-the-shelf solutions are great for generic use cases, but they often fall short when you need something that truly fits your workflow. Custom LLM apps give you control over the entire experience. You can tailor the prompts, integrate with your specific data sources, and create workflows that actually match how your team operates.

Then there’s the privacy factor. With your own LLM app, you control where your data goes and how it’s processed. No more wondering if that AI tool is using your sensitive customer information to train their next model.

Cost efficiency matters too, especially as you scale. Those per-user, per-interaction pricing models can get expensive fast. When you build your own solution, you’re typically paying for API calls and hosting costs that scale more predictably with your actual usage.

But here’s the strategic angle that really matters: building LLM apps isn’t just about solving today’s problems. It’s about creating a competitive advantage. While your competitors are limited by what their SaaS vendors decide to build, you’re creating exactly what your users need.

Core Use Cases That Actually Deliver Value

Let me paint some pictures for you, because honestly, the best way to understand LLM apps is to see them in action.

Customer Support Automation: Imagine a support system that doesn’t just route tickets based on keywords, but actually understands what the customer is really asking for. It reads the frustration in a message about a “broken feature” and escalates appropriately, while confidently handling routine questions about password resets and account settings.

Document Summarization & Contract Review: Picture your legal team receiving a 40-page partnership agreement and getting an intelligent summary that highlights unusual clauses, potential red flags, and key terms, all in minutes, not hours. The LLM doesn’t just extract text; it understands legal context and business implications.

Code Review Assistants: Think about a system that reviews pull requests not just for syntax errors, but for architectural decisions, security vulnerabilities, and maintainability concerns. It understands your codebase’s patterns and can suggest improvements that align with your team’s standards.

Marketing Content Automation: Envision generating product descriptions, social media posts, and email campaigns that maintain your brand voice while adapting to different audiences and platforms. The LLM learns your tone and creates content that actually sounds like your team wrote it.

Internal Workflow Copilots: Consider an AI assistant that knows your company’s processes well enough to help new employees navigate complex approval workflows, answer questions about policies, and even draft the right emails to the right people when something needs to happen.

Each of these scenarios shares something crucial: they’re not just automating tasks, they’re automating intelligence.

Tools & Frameworks That Make It Possible

The good news? You don’t need to build everything from scratch. The LLM app development ecosystem has matured significantly, and there’s a growing stack of tools that work beautifully together.

LangChain serves as your orchestration layer, helping you chain together different AI models, data sources, and tools into coherent workflows. Think of it as the conductor of your AI orchestra.

LlamaIndex specializes in connecting LLMs to your data, making it easy to build systems that can reason over your documents, databases, and knowledge bases. It’s particularly powerful for retrieval-augmented generation (RAG) applications.

AutoGen takes multi-agent systems to the next level, letting you create teams of AI agents that can collaborate on complex tasks. It’s like having multiple specialists working together on your automation challenges.

For the infrastructure layer, you’ve got Pinecone for vector databases, Hugging Face for model hosting and fine-tuning, and the various LLM APIs like OpenAI, Anthropic, and Google that power the intelligence.

Don’t forget the no-code and low-code options either. Zapier AI actions, Make.com, and Retool are democratizing LLM app development, letting you build sophisticated automations without diving deep into code.

The key is thinking of these as a cohesive stack rather than isolated tools. They’re designed to work together, creating a development environment where building LLM apps feels more like assembling components than writing everything from scratch.

High-Level Steps to Build Your First LLM Automation

Ready to get your hands dirty? Here’s your practical roadmap, no PhD in machine learning required.

Step 1: Define Your Task Clearly. What specific problem are you solving? “Make our customer service better” is too vague. “Automatically categorize and route support tickets while providing suggested responses for common issues” is actionable.

Step 2: Pick Your LLM Model. Start with GPT-4 or Claude for complex reasoning tasks, or consider smaller models like LLaMA for simpler, cost-sensitive applications. Don’t overthink this; you can always switch later.

Step 3: Set Up Your Data Flow. How will information get to your LLM and where will the outputs go? This might involve API connections to your CRM, database queries, or webhook integrations with your existing tools.

Step 4: Design Prompts and Templates. This is where the magic happens. Craft clear, specific prompts that consistently produce the outputs you need. Include examples and constraints to guide the model’s behavior.

Step 5: Deploy and Monitor. Start small with a pilot group, monitor the results carefully, and iterate based on real usage. Set up logging to catch hallucinations or unexpected outputs before they reach users.

Quick warnings from the trenches: budget for prompt engineering time (it takes longer than you think), watch your token costs carefully, and always have a human review process for high-stakes decisions. Trust me, learning these lessons early will save you headaches later.
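Here is one way Steps 1 and 4 might look for the ticket-routing task, as a rough sketch rather than a finished design. The category list, the prompt wording, and the JSON reply shape are all assumptions made up for this example; the model call itself is left out, so the code only builds the prompt and validates whatever text comes back.

```python
import json
from string import Template

# Hypothetical categories for the ticket-routing task from Step 1.
CATEGORIES = ["billing", "bug", "account", "other"]

PROMPT = Template(
    "You are a support triage assistant.\n"
    "Classify the ticket into one of: $categories.\n"
    'Reply with JSON only, for example: {"category": "bug", "suggested_reply": "..."}\n'
    "Ticket: $ticket"
)

def build_prompt(ticket: str) -> str:
    """Fill the template with the allowed categories and the ticket text."""
    return PROMPT.substitute(categories=", ".join(CATEGORIES), ticket=ticket)

def parse_reply(raw: str) -> dict:
    """Validate the model's reply; route anything malformed to 'other'."""
    fallback = {"category": "other", "suggested_reply": ""}
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return fallback
    if not isinstance(data, dict) or data.get("category") not in CATEGORIES:
        return fallback
    return {
        "category": data["category"],
        "suggested_reply": str(data.get("suggested_reply", "")),
    }
```

Treating the model’s reply as untrusted input, and logging every fallback, is a cheap way to catch unexpected outputs during the pilot in Step 5.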

Challenges You’ll Face (and How to Beat Them)

Let’s be real: building LLM apps isn’t all sunshine and perfectly generated responses. You’re going to hit some bumps, but they’re entirely manageable if you know what’s coming.

Hallucinations and Trust Issues are probably your biggest concern. LLMs sometimes sound confident while being completely wrong. The solution? Implement retrieval-augmented generation (RAG) to ground your model’s responses in factual data, and always design with verification steps for critical decisions.
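The core idea of RAG fits in a few lines. The sketch below is a toy under loud assumptions: the documents are invented, and retrieval is plain word overlap rather than the embedding search a vector database would give you. It shows only the shape of the technique: fetch relevant text first, then tell the model to answer from it.

```python
import re
from typing import List

# Hypothetical knowledge base; in practice these would come from your own docs.
DOCS = [
    "Refunds are issued within 14 days of purchase.",
    "Password resets are sent to the account email address.",
    "Enterprise plans include priority support.",
]

def tokens(text: str) -> set:
    """Lowercased word set used for the toy overlap score."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question: str, docs: List[str], k: int = 2) -> List[str]:
    """Rank documents by word overlap with the question (stand-in for vector search)."""
    q = tokens(question)
    ranked = sorted(docs, key=lambda d: len(q & tokens(d)), reverse=True)
    return ranked[:k]

def grounded_prompt(question: str, docs: List[str]) -> str:
    """Build a prompt that restricts the model to the retrieved context."""
    context = "\n".join(f"- {d}" for d in retrieve(question, docs))
    return (
        "Answer using only the context below. "
        "If the answer is not in the context, say you don't know.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )
```

Swapping `retrieve` for a real vector search later should not change the rest of the flow, which is part of why this pattern is a reasonable place to start.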

Scaling Costs can sneak up on you fast. Those API calls add up, especially with larger models. Consider using smaller models for simpler tasks, implement intelligent caching to avoid redundant requests, and set up monitoring to catch runaway processes before they drain your budget.
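Two of those cost controls are easy to prototype. This sketch assumes your model call can be wrapped as a simple prompt-to-text function; the class name, the `"small-model"` and `"large-model"` labels, and the 50-word cutoff are all made up for illustration, not recommendations.

```python
import hashlib
from typing import Callable, Dict

class CachedLLM:
    """Wraps any prompt -> text function and skips repeat calls for identical prompts."""

    def __init__(self, call_model: Callable[[str], str]):
        self._call_model = call_model
        self._cache: Dict[str, str] = {}
        self.api_calls = 0  # counts only real (uncached) calls

    def ask(self, prompt: str) -> str:
        # Hash the trimmed prompt so the cache key stays small and uniform.
        key = hashlib.sha256(prompt.strip().encode("utf-8")).hexdigest()
        if key not in self._cache:
            self.api_calls += 1
            self._cache[key] = self._call_model(prompt)
        return self._cache[key]

def pick_model(prompt: str, word_limit: int = 50) -> str:
    """Hypothetical routing rule: short prompts go to the cheaper model tier."""
    return "small-model" if len(prompt.split()) <= word_limit else "large-model"
```

An exact-match cache like this only helps when prompts repeat verbatim, so it suits FAQs and templated requests more than free-form chat. The `api_calls` counter doubles as a basic usage metric for monitoring.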

Security and Compliance matter more than ever when you’re processing sensitive data through AI models. Design with data filters, implement proper access controls, and consider on-premise or private cloud deployments for highly sensitive applications.
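A data filter can start as something very small. The sketch below masks email addresses and phone-like numbers before text is sent to a model. The two patterns are deliberately simple assumptions and will miss plenty of real-world formats, so treat this as a starting point, not a compliance control.

```python
import re

# Simplified patterns, assumed for illustration; real PII detection needs more care.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

def redact(text: str) -> str:
    """Mask emails and phone-like numbers before the text leaves your system."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)
```

Running the filter at the single point where prompts leave your system keeps the rule in one place instead of scattered across every feature.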

Here’s something I’ve learned: start conservative and scale up your trust gradually. It’s much easier to expand an LLM app’s responsibilities than to fix problems caused by giving it too much autonomy too early.
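One hedged way to encode “start conservative” is a review gate with a threshold you loosen over time. The `Draft` fields and the 0.8 default are assumptions for this sketch; how you compute a confidence score is left open, since that depends on your model and task.

```python
from dataclasses import dataclass

@dataclass
class Draft:
    answer: str
    confidence: float  # hypothetical score in [0, 1], however your app computes it
    high_stakes: bool  # e.g. refunds, legal text, anything hard to undo

def route(draft: Draft, threshold: float = 0.8) -> str:
    """Send a draft automatically only when it is low-risk and confident enough."""
    if draft.high_stakes or draft.confidence < threshold:
        return "human_review"
    return "auto_send"
```

Starting with a high threshold sends most drafts to a person; lowering it as the logs earn your trust expands the app’s autonomy one step at a time.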

Many developers worry about getting flagged for AI-generated content, which brings up interesting questions about why writing gets flagged as AI. The same principle applies to LLM apps: transparency about AI involvement often works better than trying to hide it.

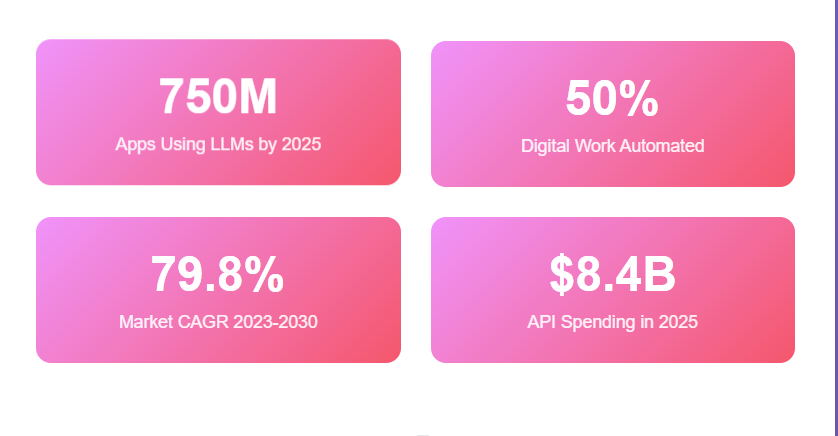

The Future of AI Automation and LLM Apps

The trajectory we’re on is fascinating. Multi-agent systems are moving from research papers to production deployments, where teams of specialized AI agents collaborate on complex workflows. Imagine your customer service, sales, and technical teams all powered by AI agents that can hand off tasks to each other seamlessly.

Autonomous workflows are getting smarter too. We’re moving toward systems that can handle entire business processes with minimal human intervention, while still maintaining appropriate oversight and control mechanisms.

The industry is also standardizing around human-in-the-loop governance patterns. The future isn’t about replacing human judgment entirely. It’s about amplifying human capabilities and ensuring AI systems remain aligned with business goals and ethical standards.

Companies are starting to develop standardized LLM app stacks, much like how web development standardized around certain frameworks and patterns. Early movers who understand these patterns will have a significant advantage as the field matures.

And speaking of characters and personalities in AI, if you’re interested in creating more engaging AI interactions, you might find that guides on how to make a good character AI bot offer insights that apply to user-facing LLM applications.

Conclusion

Here’s what we’ve covered: building LLM apps for AI automation represents a fundamental shift from rigid, rule-based automation to intelligent, adaptable systems that can understand context and make decisions. LLM apps turn automation from a brittle set of scripts into a flexible, reasoning-powered solution that grows with your needs.

The best part? You don’t need to be an AI research scientist to start building. The tools exist, the frameworks are mature, and the biggest barrier is often just taking that first step to identify a specific workflow you want to improve.

My advice? Start small, think big, and don’t wait for the perfect moment. Pick one repetitive task that’s been bugging your team, sketch out how an LLM app might handle it, and experiment with tools like LangChain or even Retool to build a proof of concept.

The future belongs to teams that can blend human creativity with AI capabilities seamlessly. The question isn’t whether you’ll eventually build LLM apps. It’s whether you’ll be ahead of the curve or playing catch-up. What workflow will you automate first?

Do I need to be a machine learning expert to build LLM apps?

Not at all. With frameworks like LangChain and tools like Retool, you can build functional LLM apps using existing APIs and pre-built components. Focus on understanding your workflow needs rather than the underlying ML theory.

How much does it cost to run an LLM app?

Costs vary widely based on usage, but expect to pay by the token rather than by the request (typically $0.002-$0.06 per 1K tokens). A customer service bot handling 100 conversations daily might cost $50-200/month, depending on complexity and model choice.
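If you want to sanity-check numbers like these for your own workload, a back-of-the-envelope helper is enough. The function below is a sketch that assumes one flat per-token price. Real pricing usually differs for input and output tokens, and the 2,000 tokens per conversation used in the note that follows is a guess, not a figure from any provider.

```python
def monthly_cost(conversations_per_day: int,
                 tokens_per_conversation: int,
                 price_per_1k_tokens: float,
                 days: int = 30) -> float:
    """Estimated monthly spend in dollars, assuming one flat per-token price."""
    total_tokens = conversations_per_day * tokens_per_conversation * days
    return total_tokens / 1000 * price_per_1k_tokens
```

With 100 conversations a day at an assumed 2,000 tokens each, that is 6 million tokens a month: about $12 at $0.002 per 1K tokens and $360 at $0.06. Prices of $0.01 to $0.03 per 1K tokens give $60 to $180, which lines up with the monthly range quoted above.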

What’s the difference between LLM apps and regular chatbots?

Traditional chatbots follow scripted decision trees and break when users ask unexpected questions. LLM apps understand natural language context and can handle novel situations by reasoning through problems rather than just matching keywords.

How do I prevent my LLM app from giving wrong information?

Use retrieval-augmented generation (RAG) to ground responses in your actual data, implement confidence scoring, and always include human review for high-stakes decisions. Start with low-risk tasks and gradually expand as you build trust.